Developing software for the healthcare industry comes with a unique set of responsibilities, especially when it comes to protecting sensitive patient data. Whether you’re building a mobile app for remote consultations or a web platform for managing medical records, HIPAA compliance is not just a legal obligation; it is also a critical part of earning user trust and safeguarding health information.

In this article, we’ll break down the core HIPAA software compliance requirements for both web and mobile apps, highlight the most common pitfalls, and explain how to build solutions that meet the highest standards of data protection.

What is HIPAA Compliance and Why Does it Matter for Apps?

HIPAA, or the Health Insurance Portability and Accountability Act, is a US law designed to protect the privacy and security of individuals’ health information. Its primary purpose is to ensure that sensitive patient data, known as protected health information (PHI), is properly safeguarded while allowing the flow of health information needed to provide high-quality care.

HIPAA applies to covered entities, which include healthcare providers, health plans, and healthcare clearinghouses.

HIPAA also applies to business associates, which are external vendors or service providers, such as software developers, cloud hosts, or billing services that access, process, or store protected health information on behalf of covered entities.

For software developers, HIPAA compliance means building systems that meet strict requirements for data privacy, security, and access control. Whether developing a telehealth app or a patient records system, developers must ensure that any software handling PHI adheres to HIPAA rules. While the technical aspects of compliance can be complex, the core goal is always to protect patient data and maintain trust.

HIPAA Compliance Requirements for Software and App Development

HIPAA compliance in software and app development means building and maintaining systems that protect sensitive health information throughout its lifecycle. For developers, this involves translating legal requirements into technical safeguards and processes. It includes ensuring secure storage, transmission, and access to protected health information (PHI) within both web and mobile applications.

From a practical standpoint, HIPAA compliance impacts how developers design authentication, encryption, audit logging, and user access features. It also shapes how data is handled in backups, how system vulnerabilities are addressed, and how third-party integrations are managed.

The key HIPAA rules developers must align with consist of the following.

- Privacy rule: this rule governs how PHI can be used and disclosed. For developers, this means building features that allow users to access their health information, request corrections to it, and control how it is shared. It also requires role-based access to limit data visibility to authorized individuals only.

- Security rule: the security rule sets the standards for protecting PHI that is stored or transmitted electronically (ePHI). Developers must implement technical safeguards, such as data encryption, secure authentication, session timeouts, and regular system audits. This rule also requires the app to protect against unauthorized access, breaches, and tampering.

- Enforcement rule: the enforcement rule outlines the procedures for investigations and penalties when HIPAA rules are violated. While this is not directly a coding task, developers must support features that help organizations prove compliance, such as detailed activity logs and system monitoring.

- Breach notification rule: this rule requires timely notification to affected individuals and authorities if a breach occurs. Developers should build in detection and alert systems that flag suspicious activity and provide audit trails to support quick investigations and transparent reporting.
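As one illustration of the detection idea in the breach notification rule, the sketch below flags a user after too many failed logins inside a sliding time window. The class name, threshold, and window length are hypothetical choices for this example, not values taken from HIPAA; a real system would feed such flags into its alerting and audit pipeline.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

# Hypothetical thresholds; tune them to your own risk assessment.
MAX_FAILED_LOGINS = 5
WINDOW = timedelta(minutes=10)


class FailedLoginMonitor:
    """Flags a user once too many failed logins fall inside a sliding window."""

    def __init__(self, max_failures=MAX_FAILED_LOGINS, window=WINDOW):
        self.max_failures = max_failures
        self.window = window
        self._failures = defaultdict(deque)  # user_id -> timestamps of failures

    def record_failure(self, user_id, when):
        """Record a failed login; return True if the user should be flagged."""
        attempts = self._failures[user_id]
        attempts.append(when)
        # Drop attempts that have aged out of the window.
        while attempts and when - attempts[0] > self.window:
            attempts.popleft()
        return len(attempts) >= self.max_failures


monitor = FailedLoginMonitor()
start = datetime(2024, 1, 1, 9, 0, 0)
flags = [monitor.record_failure("nurse-17", start + timedelta(minutes=i)) for i in range(5)]
print(flags)  # [False, False, False, False, True]
```

Failed logins are only one signal; the same pattern could be applied to unusual record-access volumes or access outside working hours.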

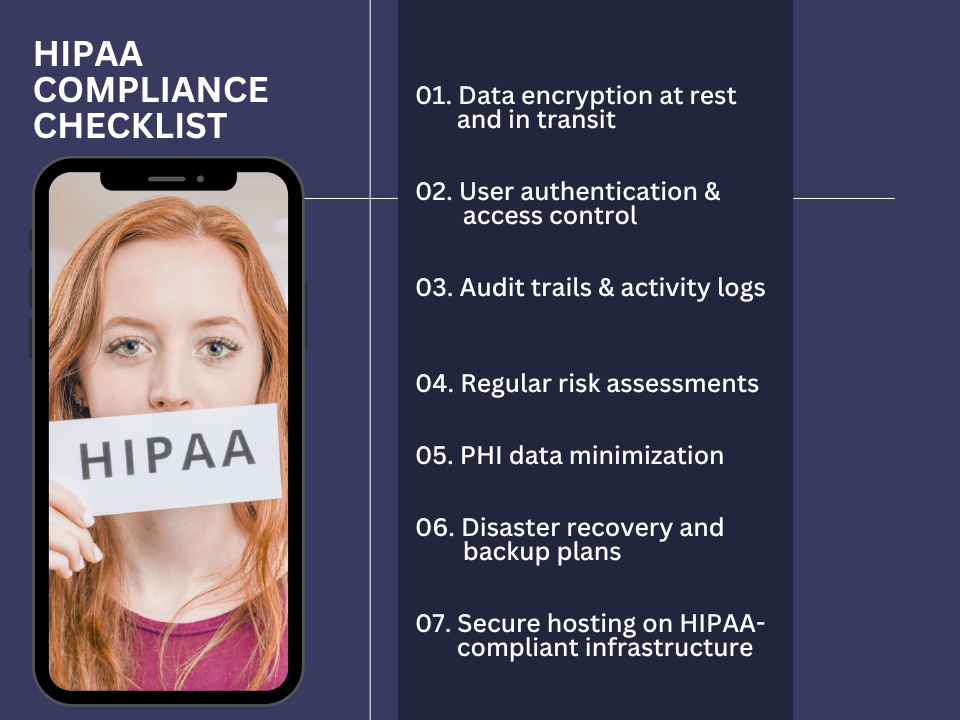

HIPAA Compliance Checklist for Software Development

Building HIPAA-compliant web and mobile applications requires a strategic and structured approach to both software architecture and data handling. Below is a HIPAA compliance checklist designed to help app developers align their applications with HIPAA requirements and avoid costly security failures or legal consequences.

1. Data Encryption at Rest and In Transit

Encrypting PHI is essential to prevent unauthorized access during storage or transmission. All data stored on servers or mobile devices must be encrypted using industry-standard methods, such as AES-256. Similarly, data sent over networks must be protected using secure protocols like TLS 1.2 or higher. Encryption ensures that even if data is intercepted or stolen, it cannot be read without the proper decryption keys.
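For the in-transit half of this requirement, a minimal sketch using Python's standard `ssl` module is shown below. It builds a client context that verifies certificates and refuses anything older than TLS 1.2. The helper name `make_client_tls_context` is our own; encryption at rest with AES-256 is not shown here because it typically relies on a third-party library or on the storage layer itself.

```python
import ssl


def make_client_tls_context():
    """Build a client-side TLS context that refuses anything older than TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_tls_context()
print(context.minimum_version)
```

The resulting context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=context)`.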

2. User Authentication and Access Control

Access to PHI must be restricted to authorized personnel only. Developers should implement strong authentication mechanisms, including multi-factor authentication (MFA), secure password requirements, and session timeout features. Role-based access control (RBAC) should also be used to assign permissions based on a user’s job function. This limits exposure of sensitive information and reduces the risk of internal breaches.
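The sketch below shows how role-based access control and a session timeout might combine into a single authorization check. The role names, permissions, and the 15-minute idle limit are illustrative assumptions, not values prescribed by HIPAA.

```python
from datetime import datetime, timedelta

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "physician": {"read_record", "write_record"},
    "billing": {"read_billing"},
    "front_desk": {"read_schedule"},
}
SESSION_IDLE_LIMIT = timedelta(minutes=15)  # assumed value, not a HIPAA-mandated number


def is_allowed(role, permission, last_activity, now):
    """Allow an action only if the session is still active and the role grants it."""
    if now - last_activity > SESSION_IDLE_LIMIT:
        return False  # idle session: the user must re-authenticate
    return permission in ROLE_PERMISSIONS.get(role, set())


now = datetime(2024, 1, 1, 12, 0)
recent = now - timedelta(minutes=5)
print(is_allowed("physician", "read_record", recent, now))  # True
print(is_allowed("billing", "read_record", recent, now))    # False
```

Unknown roles fall through to an empty permission set, so the check denies by default.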

3. Audit Trails and Activity Logs

HIPAA requires applications to keep detailed records of who accessed PHI, when, and what actions were taken. Developers should implement logging systems that track login attempts, data access, modifications, and any administrative actions. These logs must be securely stored and monitored for suspicious activity. Regular audits help organizations detect and respond to potential threats quickly.
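One way to make such logs tamper-evident is to chain entries together with hashes, so that editing an earlier entry invalidates everything after it. The following is a minimal in-memory sketch; the field names and the helper functions `append_entry` and `verify_chain` are hypothetical, and a production system would also need secure, append-only storage.

```python
import hashlib
import json


def _digest(body):
    """Hash an entry body deterministically (keys sorted before serializing)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, user_id, action, resource, timestamp):
    """Append an audit entry whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    body = {
        "user_id": user_id,
        "action": action,
        "resource": resource,
        "timestamp": timestamp,
        "prev_hash": prev_hash,
    }
    log.append({**body, "hash": _digest(body)})


def verify_chain(log):
    """Return True if every entry's hash and its link to the predecessor check out."""
    prev_hash = "0" * 64
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "dr-lee", "read", "record/42", "2024-01-01T09:00:00Z")
append_entry(audit_log, "dr-lee", "update", "record/42", "2024-01-01T09:05:00Z")
print(verify_chain(audit_log))  # True
```

Note that this check detects edits and reordering but not removal of the most recent entries; periodically anchoring the latest hash in a separate system is one way to cover that gap.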

4. Regular Risk Assessments

Risk assessments are essential for identifying vulnerabilities in the software and overall infrastructure. Developers should work with security teams to perform regular evaluations of their systems. This includes scanning for outdated dependencies, misconfigurations, and other potential risks. Any identified gaps should be addressed promptly through code updates or system improvements.

5. PHI Data Minimization

Apps should collect and store only the minimum amount of PHI necessary for their functionality. Developers should avoid over-collecting sensitive data and implement data retention policies that automatically purge old or unnecessary records. Reducing the amount of PHI stored decreases the potential impact of a breach and simplifies compliance efforts.
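A rough sketch of both ideas, field-level minimization and retention-based purging, is shown below. The allowlist and the one-year retention period are hypothetical; actual retention periods depend on the organization's policies and applicable law.

```python
from datetime import date, timedelta

# Hypothetical allowlist and retention period, for illustration only.
ALLOWED_FIELDS = {"patient_id", "name", "medications", "last_used"}
RETENTION = timedelta(days=365)


def minimize(record):
    """Drop every field the app does not strictly need before storing the record."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


def purge_expired(records, today):
    """Keep only records last used within the retention period."""
    return [r for r in records if today - r["last_used"] <= RETENTION]


incoming = {
    "patient_id": "p1",
    "name": "A. Patient",
    "medications": ["metformin"],
    "ssn": "000-00-0000",  # not needed by the app, so it is never stored
    "last_used": date(2024, 5, 1),
}
stored = [minimize(incoming), {"patient_id": "p2", "last_used": date(2022, 1, 1)}]
kept = purge_expired(stored, today=date(2024, 6, 1))
print([r["patient_id"] for r in kept])  # ['p1']
```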

6. Disaster Recovery and Backup Plans

In the event of system failure or data loss, the application must be able to recover PHI quickly and securely. Developers should implement automated backup procedures and create disaster recovery plans that include regular testing. Backups must also be encrypted and stored in secure locations to prevent unauthorized access.

7. Secure Hosting on HIPAA-Compliant Infrastructure

Where and how data is hosted is critical to compliance. Developers must ensure the app runs on HIPAA-compliant infrastructure, such as cloud services that offer business associate agreements (BAAs) and implement necessary safeguards. Hosting providers must support data encryption, access controls, intrusion detection, and logging.

HIPAA Compliance in Mobile Apps: Unique Considerations

HIPAA compliance in mobile apps requires careful attention to how data is stored, transmitted, and accessed. One of the key concerns is secure data storage. Developers must decide whether to store protected health information on the device or in the cloud. On-device storage increases the risk of data exposure if the device is lost or stolen, so any local data must be encrypted and protected with strong access controls. Cloud storage can be more secure if hosted on HIPAA-compliant infrastructure with appropriate encryption and access restrictions.

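App Transport Security (ATS), a feature in iOS, enforces secure HTTPS connections and helps protect data in transit. ATS is enabled by default for apps built against the iOS 9 SDK or later, so developers should keep it on and avoid adding broad exceptions. This keeps mobile apps on encrypted channels, which is essential for HIPAA compliance.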

Additional mobile-specific safeguards include secure push notifications that avoid exposing PHI, session timeouts that log users out after inactivity, and biometric login options, such as fingerprint or face recognition, to strengthen access security.
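To illustrate PHI-free push notifications, the server-side sketch below sends only generic text and an opaque message ID, leaving the app to fetch the actual content over an authenticated, encrypted connection. The payload shape and the `build_push_payload` helper are hypothetical and not tied to any specific push service.

```python
import json


def build_push_payload(message):
    """Build a notification carrying generic text and an opaque ID, never PHI."""
    # Only the ID leaves the server; sender, subject, and message text stay behind.
    return {
        "title": "New message",
        "body": "You have a new secure message. Open the app to view it.",
        "data": {"message_id": message["id"]},
    }


message = {
    "id": "msg-123",
    "sender": "Dr. Lee",
    "text": "Your lab results are ready for review.",
}
payload = build_push_payload(message)
print(json.dumps(payload, indent=2))
```

Because the lock screen shows only the generic text, a lost or shared device does not reveal who sent the message or what it concerns.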

Finally, developers must avoid using SMS or MMS to transmit PHI, as these channels are not secure. Messages can be intercepted or stored by carriers without encryption, putting patient data at risk. Instead, in-app messaging with encryption should be used for any health-related communication.

Does Your App Need to be HIPAA Compliant? 3 Key Questions

To determine whether a web or mobile app needs to be HIPAA compliant, companies can start by asking these three key questions:

Is the App Built for a Covered Entity (CE) or Business Associate (BA)?

Understanding who the app is for helps define whether HIPAA applies. If the app is developed for a healthcare provider, health plan, or any third party handling PHI on their behalf, then it must follow HIPAA regulations.

For example, if you're building a patient management app for a hospital, HIPAA compliance is required.

Identify the client’s role to determine your legal obligations.

Does it Collect, Store, or Share PHI?

If the app, working for or on behalf of a covered entity or business associate, gathers any health-related data that can be linked to an individual (such as a medical history tied to a name or contact details), it is handling PHI. Even if the app only stores this data temporarily or passes it to another service, HIPAA applies. For instance, an app that tracks medication schedules and links them to patient profiles must be compliant.

Are Proper Safeguards in Place?

HIPAA requires technical protections for data at rest and in transit. Companies should confirm the app uses encryption, secure logins, access controls, and audit logging. A lack of these safeguards indicates non-compliance and high risk.
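The three questions can be expressed as a simple screening function. This is a deliberately simplified sketch: it assumes HIPAA applies when the app is built for a covered entity or business associate and handles PHI, and it checks only the four safeguards named above. The function and safeguard names are our own, and a real determination needs legal review.

```python
REQUIRED_SAFEGUARDS = ("encryption", "secure_login", "access_control", "audit_logging")


def assess_app(built_for_ce_or_ba, handles_phi, safeguards_in_place):
    """Answer the three questions: does HIPAA apply, and which safeguards are missing?"""
    hipaa_applies = built_for_ce_or_ba and handles_phi
    missing = [s for s in REQUIRED_SAFEGUARDS if s not in safeguards_in_place]
    return {
        "hipaa_applies": hipaa_applies,
        "missing_safeguards": missing if hipaa_applies else [],
    }


result = assess_app(True, True, {"encryption", "secure_login"})
print(result)
# {'hipaa_applies': True, 'missing_safeguards': ['access_control', 'audit_logging']}
```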

Vetting an App Developer for HIPAA Compliance

Choosing a HIPAA-aware vendor is critical for any company developing healthcare applications. A vendor that understands HIPAA requirements can help prevent costly data breaches, legal issues, and reputational damage. These vendors build apps with privacy and security in mind from day one, ensuring compliance is not an afterthought.

When selecting a vendor, start by reviewing their portfolio of HIPAA-compliant apps. Experience with similar projects shows they understand the complexities of handling protected health information (PHI).

Next, evaluate their security testing procedures. Vendors should regularly conduct penetration testing and vulnerability assessments to identify and fix weaknesses before threats emerge.

Finally, assess their knowledge of cloud stack compliance. Whether the app is hosted on AWS, Azure, or Google Cloud, the vendor must understand how to configure services securely and use features that meet HIPAA standards, such as encryption, logging, and access control.

Choosing the right partner ensures your app is both functional and compliant.

(Source: https://aloa.co/blog/hipaa-compliant-software-development )

Common HIPAA Pitfalls Developers Should Avoid

Developers working on healthcare apps must be aware of common HIPAA pitfalls that can lead to serious compliance failures. Avoiding these mistakes is essential to protect patient data and meet regulatory standards. Below are some of the most frequent issues developers should watch out for.

- Collecting unnecessary PHI: collecting more protected health information (PHI) than needed increases risk and makes compliance more difficult. For example, if an app only needs a patient’s name and medication list, there is no reason to collect full medical histories or Social Security numbers. Always follow the principle of data minimization by collecting only what is essential for the app’s function.

- Failing to encrypt PHI: one of the most critical safeguards under HIPAA is encryption. Storing or transmitting unencrypted PHI puts patient data at high risk if the system is breached. Developers should use strong encryption methods for both data at rest and data in transit to protect sensitive information.

- Poor documentation or lack of audit trails: HIPAA requires that access to PHI be monitored and traceable. Without proper logs and audit trails, it becomes nearly impossible to detect unauthorized access or respond to investigations. Developers must implement activity logging that records user actions, data access, and changes in the system.

- Not using HIPAA-compliant cloud services: hosting PHI on standard cloud infrastructure without proper safeguards or Business Associate Agreements (BAAs) violates HIPAA. Developers must choose cloud providers like AWS, Azure, or Google Cloud that offer HIPAA-compliant services and sign BAAs to ensure shared responsibility for data protection.

- Not disclosing policies clearly to users: users must understand how their data is used, stored, and protected. Failing to provide transparent privacy policies and terms of service can create legal exposure. Developers should work with legal teams to ensure policies are clearly written and easily accessible.

HIPAA Violations: What’s at Stake?

When HIPAA violations occur, the consequences can be severe for both healthcare providers and their vendors. Financial penalties are issued based on a tiered system that considers the level of culpability. Tier 1 applies when the organization was unaware of the violation and could not reasonably have known about it; penalties start at $100 per violation. Tier 4, the most serious, involves willful neglect without timely correction and carries a penalty of at least $50,000 per violation, with an annual cap of $1.5 million for repeated violations of the same provision. These are the base statutory figures, and the actual amounts are adjusted periodically for inflation.
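To see how the annual cap interacts with per-violation penalties, here is a small arithmetic sketch using the $50,000 per-violation figure and the $1.5 million annual cap mentioned above. The function name is hypothetical and the numbers are illustrative only, since the amounts actually assessed depend on the tier, the circumstances, and current inflation adjustments.

```python
def annual_penalty(violations, per_violation, annual_cap=1_500_000):
    """Total penalty for identical violations in one year, limited by the annual cap."""
    return min(violations * per_violation, annual_cap)


print(annual_penalty(10, 50_000))  # 500000
print(annual_penalty(40, 50_000))  # 1500000 (40 x 50,000 = 2,000,000, capped)
```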

Beyond financial loss, reputational damage can be even more sustained. Patients expect their health data to remain private. A breach often results in lost trust, patient attrition, and negative media attention.

Vendors who handle protected health information also face liability. If a third-party developer or service provider contributes to a breach, they can be held accountable under the business associate agreement. HIPAA compliance is not optional: it protects both patients and the businesses that serve them.


If you’re planning to develop a healthcare app and want to ensure full HIPAA compliance, AppIt is here to help. With years of experience building secure, user-friendly applications for the healthcare industry, our team understands exactly what it takes to meet HIPAA requirements from the ground up. From data encryption to cloud compliance, we’ve done it all. Contact AppIt today to schedule a consultation and take the first step toward building a trusted, compliant healthcare solution.